
When I was at Groupon, there was an entire consumer insights department. Smart people. Deep research. Genuinely interesting findings.

They had built out these detailed personas: demographics, purchase profile, what they care about, how they make decisions, doubts, etc.

Real depth.

Meanwhile, I was on the growth marketing team building customer lifecycle emails. We were sending a lot of emails (4 per day!). Emails based on your last purchase. Emails with Groupons that were trending. Seasonal emails. Winback emails. Forgot something in your cart emails.

But not one email mapped back to what the consumer insights team had actually learned about consumers. The research was sitting in a deck somewhere. The emails were going out anyway. Two teams, same company, zero connection.

And for a long time, I couldn't figure out why. They were doing everything right: surveys, interviews, patterns, synthesis. Beautiful charts, decks.

But nothing changed. No decisions got made differently. No positioning shifted. The research just... sat there.

That’s when I realized: those weren’t insights. They were information. And that’s not the same thing.

What they were producing wasn't useless. It just wasn't decision-grade.

An insight is something you learn that changes how your entire company goes to market.

Not "interesting to know." Not "good for the deck." Changes. How your entire company. Goes to market.

By that definition? Most "insights" aren't insights.

A real insight has four traits.

It's causal. It explains why someone changed their behavior. Not what they liked, not what they preferred. What actually moved them. This is why buyer research is not the same thing as customer research: your buyers haven’t bought yet, so nothing has moved them.

It's specific. It couldn't apply to any other company. The buyer conversations you’re having look a lot like the ones your competitor is having, so what you learn from them could apply to you and three competitors.

It's surprising. Not shocking, but "huh, I didn't expect that."

And it's emotionally loaded. There's relief under there, or fear, or pride, or frustration. Something with weight that reflects the human condition.

Here's what most research tools get wrong.

They give you patterns. They give you themes. Things that came up multiple times. They even give you quotes to back it up. Then they slap the word “insight” on top.

But frequency isn't causation.

Just because five customers said "it saves time" doesn't mean time-saving is what caused them to buy. It means five customers gave you the easiest possible answer to your question. It sounds like insight, but it contains zero useful information.

"It saves time"…okay. Time doing what? Which part? What were you doing before that took longer? What specifically changed?

Most research conversations never get there. And now AI tools make things worse.

Try feeding AI your call transcripts and you’ll find it gives you dozens of "insights" with nothing to back them up other than pattern recognition and quotes. Just because something came up multiple times doesn't make it a decision insight!

And yet, this is how companies today are making decisions. That’s so cray to me.

So I built Aha PMM to separate real decision insight from organized noise.

Aha PMM finds, organizes, and scores the insights buried in your customer and prospect conversations that change how your entire company goes to market.

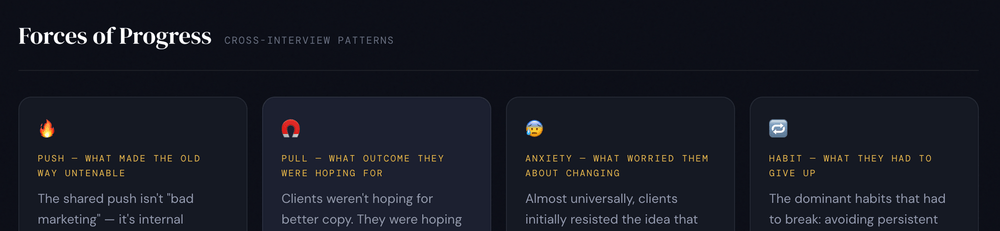

It starts by mapping the four forces behind every purchase before looking for a single insight.

Push: what made the old way unbearable.

Pull: what outcome they were imagining.

Anxiety: what almost stopped them.

Habit: what they had to give up.

The real insight is usually not in the polished answer. It’s in the tension.

Anxiety and Habit are where the real stuff lives because customers don't volunteer those…you really have to probe.
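To make the four forces concrete, here's a rough sketch of how you might record them per interview and spot the ones nobody probed for. This is not Aha PMM's code. The `ForceMap` class, the `missing_forces` method, and the example notes are all made up for illustration.

```python
from dataclasses import dataclass, field

# The four forces named above, in the order they were listed.
FORCES = ("push", "pull", "anxiety", "habit")


@dataclass
class ForceMap:
    """Notes captured from one interview, grouped by force (hypothetical structure)."""
    push: list[str] = field(default_factory=list)
    pull: list[str] = field(default_factory=list)
    anxiety: list[str] = field(default_factory=list)
    habit: list[str] = field(default_factory=list)

    def missing_forces(self) -> list[str]:
        # A force with no notes usually means the interviewer never probed for it.
        return [name for name in FORCES if not getattr(self, name)]


# Made-up example: the customer volunteered Push and Pull, nothing else.
interview = ForceMap(
    push=["Spent two days a month reconciling spreadsheets by hand"],
    pull=["Wanted to walk into the board meeting with numbers she trusted"],
)
print(interview.missing_forces())
```

If Anxiety and Habit keep coming back empty, that's a signal about the interview, not the customer.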

Then it looks at how the interview was conducted. Did the interviewer probe past the surface answer? Did they lead the witness? Did they stop at "easy to use" or did they go three levels deep until they got to something causal? If not, flag it.

Bad data produces misleading insights. That's worse than no data. I wonder how many of the Gong calls you’re using as research would pass this check? 👀

Then it classifies what kind of insight you're actually looking at. There are 10 types including Value Perception Gap, Real Job-to-be-Done, and Unexpected Outcome. The one everyone overlooks? Emotional Transformation: when the product didn't just change the workflow, it changed who the person is at work (their identity or role). That's a completely different message than "saves time."

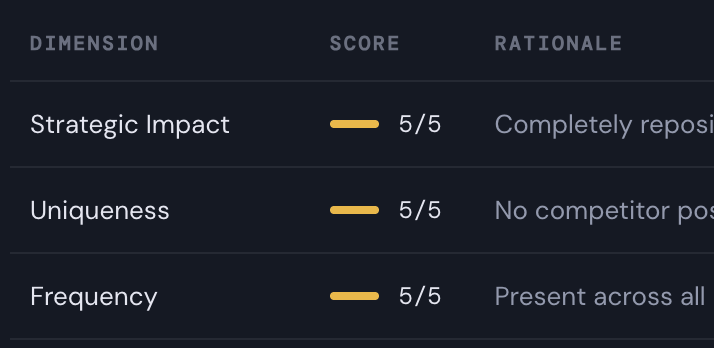

And finally, it scores the insight. Six dimensions including strategic impact and uniqueness, thirty points max. That score is what lets you walk into a cross-functional meeting and say “the score is 28 out of 30. That's why we're leading with it. Because customers told us.”
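Here's a minimal sketch of what a six-dimension, thirty-point score could look like. It assumes each dimension is scored 0 to 5 (thirty divided by six), which is my guess at the arithmetic, not a confirmed detail. Only strategic impact and uniqueness are named above, so the other four dimension names are placeholders, and `total_score` is a hypothetical helper.

```python
# Assumption: six equally weighted dimensions, each scored 0-5, for 30 max.
MAX_PER_DIMENSION = 5

# Only the first two names come from the text; the rest are placeholders.
DIMENSIONS = (
    "strategic_impact",
    "uniqueness",
    "dimension_3",
    "dimension_4",
    "dimension_5",
    "dimension_6",
)


def total_score(scores: dict[str, int]) -> int:
    """Sum the six dimension scores, rejecting missing or out-of-range values."""
    for name in DIMENSIONS:
        if name not in scores:
            raise ValueError(f"missing score for {name}")
        if not 0 <= scores[name] <= MAX_PER_DIMENSION:
            raise ValueError(f"{name} must be between 0 and {MAX_PER_DIMENSION}")
    return sum(scores[name] for name in DIMENSIONS)


# Made-up scores for one insight.
example = {
    "strategic_impact": 5,
    "uniqueness": 5,
    "dimension_3": 5,
    "dimension_4": 4,
    "dimension_5": 5,
    "dimension_6": 4,
}
print(f"{total_score(example)} out of {MAX_PER_DIMENSION * len(DIMENSIONS)}")
```

The point of a fixed rubric is that two people scoring the same insight have to disagree about a specific dimension, not about a gut feeling.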

Most teams don’t lack data. They lack clarity on what matters.

They’ve got recordings, call notes, quotes, and patterns, but don't know what to do next. That's the gap, but it can be fixed.

That’s why I built Aha PMM.

Aha PMM finds, organizes, and scores the insights buried in customer and prospect conversations so PMMs can stop arguing from instinct and start making decisions they can back up.

Because an insight is only an insight if it changes a decision.

Everything else is just information, sitting in a deck somewhere.

Wanna try out Aha PMM? All you need are conversation transcripts. Reach out and let me know on LinkedIn or email me: anna@furmanovmarketing.com.
